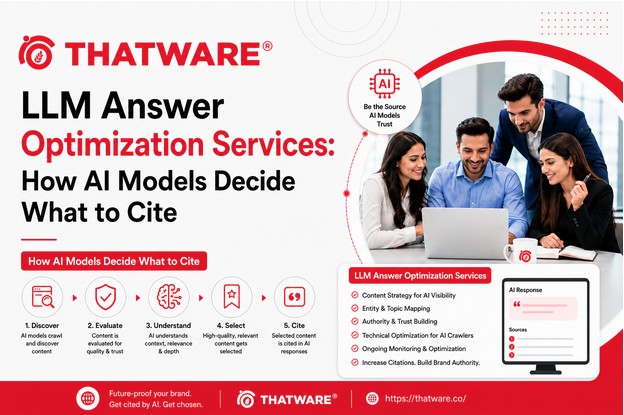

Most brands think about AEO from the outside: how do we appear in AI answers? But understanding why AI models cite some sources and not others requires going a level deeper — into how these systems actually process information and form responses.

Once you understand the mechanics, the strategy becomes a lot clearer.

How LLMs Form Responses

A large language model doesn’t “search” for answers the way Google does. It generates responses based on patterns learned during training: given the query and context, it produces text one token at a time, favoring continuations that are statistically likely. The result often reads as accurate and helpful, but what the model works from is likelihood, not a lookup of verified facts.

That sounds abstract, but the practical implication is important: what an LLM “knows” about your brand — and how confidently it knows it — is a function of how often and how consistently your brand appeared in the data it was trained on, and in the data it retrieves at inference time (for systems with retrieval-augmented generation).

If your brand appeared in high-quality, credible sources across the training corpus with consistent, clear descriptions, the model develops a strong internal representation of what your brand is. If your brand appeared infrequently, inconsistently, or mostly in self-published content, the model’s picture of you is fuzzy, and citation confidence drops.
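To make the idea of a “probabilistic sequence” concrete, here is a minimal toy sketch of how a model might pick the next word after a prompt. The brand names and probabilities are invented for illustration; a real model computes a distribution over tens of thousands of tokens with a neural network, not a hand-written table.

```python
import random

# Hypothetical next-token probabilities after the prompt
# "The best CRM for small teams is". All names and numbers are
# invented for illustration; they do not come from any real model.
NEXT_TOKEN_PROBS = {
    "BrandA": 0.55,  # imagined as described often and consistently
    "BrandB": 0.30,
    "BrandC": 0.15,  # imagined as described rarely or inconsistently
}


def sample_next_token(probs, rng):
    """Draw one token in proportion to its probability."""
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


def most_likely_token(probs):
    """Return the single highest-probability token."""
    return max(probs, key=probs.get)


# Seeded generator so repeated runs give the same draws.
rng = random.Random(0)
samples = [sample_next_token(NEXT_TOKEN_PROBS, rng) for _ in range(1000)]
counts = {t: samples.count(t) for t in NEXT_TOKEN_PROBS}

print(most_likely_token(NEXT_TOKEN_PROBS))
print(counts)
```

In this toy setup, the brand with the strongest representation is named most often, while weaker brands still appear occasionally. That is one way to picture a “fuzzy” representation: lower probability mass, not a hard yes or no.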

Training Data vs. Real-Time Retrieval

Modern AI systems for search typically combine two information sources: their training data (what the model learned during training) and real-time retrieval (web content fetched at the moment of responding).

This distinction matters for optimization strategy.

For training data influence, the levers are building a presence in major publications, industry media, Wikipedia, and other sources that models are likely to have trained on. This is a long-cycle investment: training runs happen infrequently, and influencing them requires a consistent external presence built over time.

For real-time retrieval, the levers are more immediate: having well-structured, indexed, frequently updated content on your website and in credible external sources that retrieval systems can access and surface.

Professional LLM answer optimization services address both — not just one or the other.

The Credibility Signals LLMs Weight Heavily

Based on what we understand about how these models work, several signals appear to increase citation likelihood:

Source authority. Content from established, well-regarded publications carries more weight than content from low-authority sources. Being cited in The Wall Street Journal or a major industry publication creates a different signal than a mention in a random blog.

Factual consistency. When the same claims about your brand appear consistently across multiple sources, the model develops higher confidence that those claims are accurate. Contradictions or inconsistencies reduce that confidence.

Specificity and directness. Models tend to cite sources that directly and specifically answer the query — not sources that address the topic generally. Content that says “X is best for Y use case because of Z specific reason” is more citable than content that discusses X in vague, general terms.

Entity clarity. Brands that exist as well-defined entities in structured knowledge systems are more reliably cited because the model can confidently connect the source to a known entity, reducing ambiguity about who or what is being referenced.
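One common way a site declares itself as a well-defined entity is schema.org `Organization` markup in JSON-LD. The sketch below builds such a record in Python. The company name, URLs, and description are placeholders, and whether any particular AI system reads this markup is an assumption, not something established here.

```python
import json


def organization_jsonld(name, url, description, same_as):
    """Build a schema.org Organization record as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # sameAs links the entity to its profiles on other sites,
        # which helps disambiguate who is being referenced.
        "sameAs": list(same_as),
    }


# Placeholder values for illustration only.
markup = organization_jsonld(
    name="Acme CRM",
    url="https://example.com",
    description="Acme CRM is customer relationship software for small teams.",
    same_as=[
        "https://www.linkedin.com/company/example",
        "https://en.wikipedia.org/wiki/Example",
    ],
)

# The printed JSON would typically be embedded in a page inside a
# <script type="application/ld+json"> element.
print(json.dumps(markup, indent=2))
```

Using the same name and description here as in off-site profiles also supports the factual consistency signal described above.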

Retrieval-Augmented Generation: The Architecture That’s Reshaping AEO

Many of the most important AI search systems today use what’s called retrieval-augmented generation (RAG). Rather than relying purely on training data, these systems fetch relevant documents from the web or a curated database at query time, then generate a response that synthesizes those retrieved sources.

Perplexity is a prominent example. Google’s AI Overviews also incorporate real-time retrieval. ChatGPT with browsing enabled does as well.

For brands trying to appear in these systems, RAG means that on-site content quality and indexability matter a lot, perhaps more directly than training data influence. If your content can be retrieved, is well-structured, and directly answers the query, a RAG-based system can surface and cite it soon after the page is crawled and indexed, rather than only after the next training cycle.

This is actionable. It means high-quality, well-optimized content can produce real AEO results faster through RAG-based systems than it can by waiting for training data cycles to incorporate your brand presence.
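The retrieve-then-generate flow described in this section can be sketched in a few lines. This is a deliberately simplified illustration: the documents, URLs, and keyword-overlap scoring are all assumptions made for the example. Production systems typically use search indexes or embedding similarity, and the final prompt would be sent to a language model, a step omitted here.

```python
import re

# Hypothetical mini-index standing in for crawled web pages.
DOCUMENTS = [
    {"url": "https://example.com/pricing",
     "text": "Acme CRM costs 12 dollars per user per month for small teams."},
    {"url": "https://example.com/about",
     "text": "Acme was founded in 2015 and builds software."},
    {"url": "https://example.org/review",
     "text": "For small teams, Acme CRM is easy to set up and affordable."},
]


def tokenize(text):
    """Lowercase the text and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, documents, top_k=2):
    """Rank documents by how many query words they share; keep the top_k."""
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(d["text"])), d) for d in documents]
    scored = [(score, d) for score, d in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:top_k]]


def build_prompt(query, sources):
    """Assemble the text a generator model would receive."""
    numbered = "\n".join(
        f"[{i}] {d['url']}: {d['text']}" for i, d in enumerate(sources, start=1)
    )
    return (
        "Answer using only the sources below and cite them by number.\n\n"
        f"{numbered}\n\nQuestion: {query}"
    )


query = "What does Acme CRM cost for small teams?"
sources = retrieve(query, DOCUMENTS)
prompt = build_prompt(query, sources)
print(prompt)
```

Note that the two pages that address the question directly are retrieved, while the general “about” page is not. That mirrors the point about specificity: only content that makes it into the retrieved set can be cited at all.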

What Optimization Services Actually Do With This Understanding

The best AI answer engine optimization agencies translate these mechanics into practical program design.

Content is structured for both training data credibility (authoritative, well-sourced, consistently accurate) and retrieval-time performance (clearly structured, passage-level optimized, directly responsive to query formats). Off-site citation building targets the specific publications and sources that RAG systems prioritize in their retrieval indices. Entity optimization ensures your brand is correctly represented in the structured data signals that both training and retrieval systems use to understand who you are.

The brands that emerge as the default AI recommendation in their categories aren’t there by accident. They’ve built the information ecosystem that makes LLM confidence possible.

Understanding how these systems work is the first step. Building the content, entity presence, and off-site authority to capitalize on that understanding is the work.

That work is what serious LLM optimization services do — and why it produces results that surface-level AEO approaches can’t match.